RoVo: Robust Voice Protection Against Voice Cloning Attacks via Embedding-Level Adversarial Perturbations

The implementation and source code of RoVo are publicly available at https://anonymous.4open.science/r/RoVo_artifact/

Abstract

Proactive defenses against unauthorized voice cloning typically protect shared speech by adding adversarial perturbations to the waveform. While prior methods have improved perceptual quality, efficiency, and deployability, a defense that an adaptive attack can easily weaken or remove is difficult to regard as practical protection. This robustness gap is especially critical in real-world settings, where attackers can apply modern speech enhancement and purification models before cloning. To address this limitation, we propose RoVo, a robust voice protection framework that optimizes adversarial perturbations in the latent space of a neural audio codec rather than directly in the waveform domain. By perturbing codec embeddings and decoding them back into audio, RoVo produces structured perturbations that are more tightly coupled with the acoustic structure of speech and are therefore harder to suppress through enhancement-based removal. To balance protection strength and perceptual quality, we further adopt a dynamic alternating optimization strategy that switches between speaker-identity disruption and quality-preservation objectives during perturbation generation. Extensive experiments show that RoVo achieves an average Defense Success Rate of 88.0% across multiple voice cloning models, remains effective under weak, moderate, and strong adaptive attacks as well as in black-box transfer settings, and outperforms representative signal-domain defenses under moderate adaptive attacks. Despite a measurable quality trade-off, objective and human evaluations indicate that the protected speech remains usable while providing substantially more durable protection. These results highlight robustness against adaptive removal as a primary requirement for proactive voice defense.

Model Overview

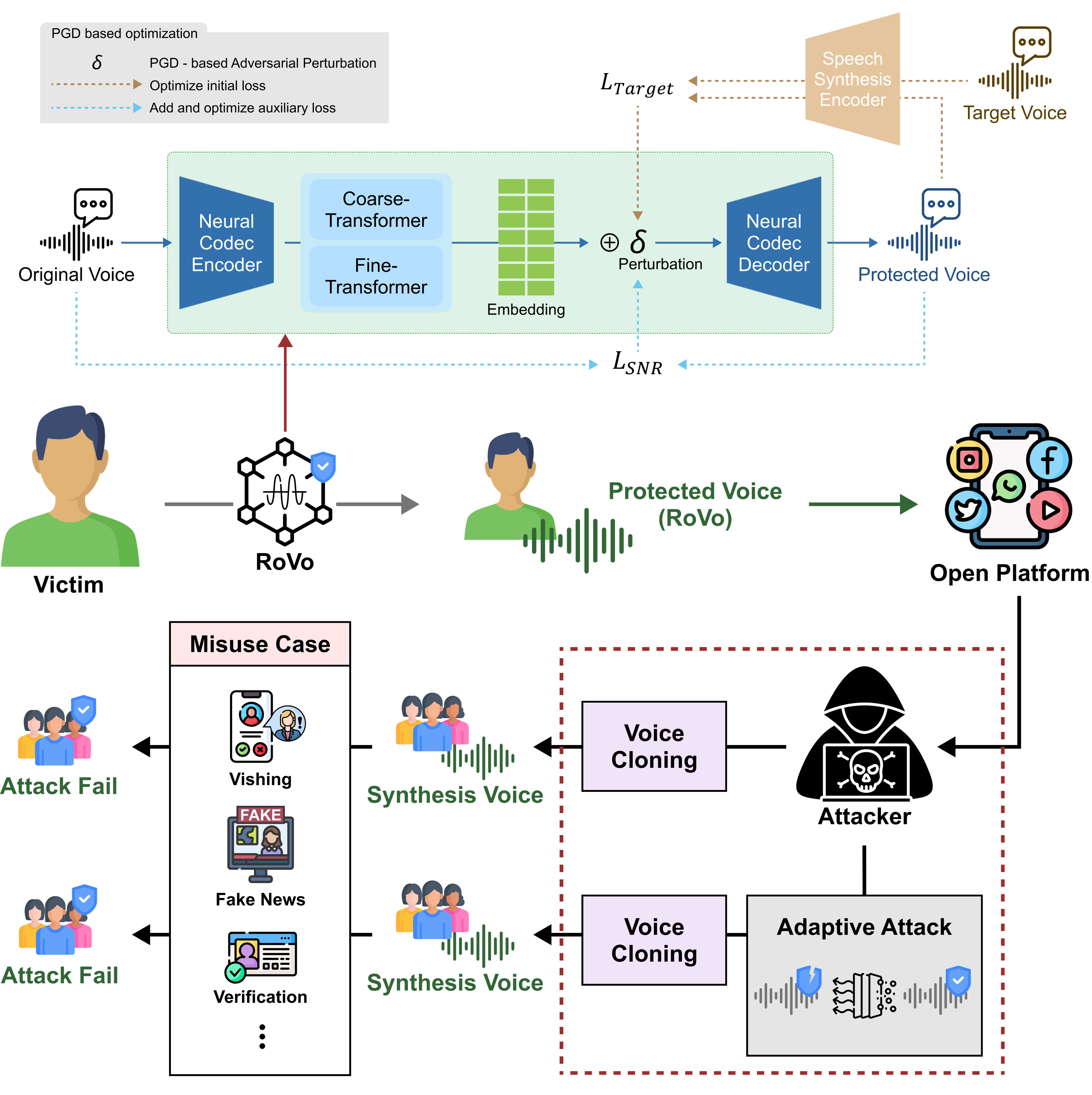

Overview of the proposed adversarial embedding defense mechanism. The system protects against unauthorized voice synthesis and downstream misuse such as vishing, fake news, and fraudulent verification, even in the presence of noise removal or speech enhancement techniques.

The architecture of RoVo, the proposed voice protection framework. RoVo applies adversarial perturbations directly to the embedding space generated by the Neural Codec Encoder. The perturbed embeddings are processed by the Neural Codec Decoder to reconstruct the protected voice while maintaining naturalness.
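The abstract describes perturbing codec embeddings and alternating between speaker-identity disruption and quality preservation. The loop below is a minimal toy sketch of that idea under loud assumptions: `DECODER` and `SPEAKER` are tiny linear stand-ins rather than a neural codec decoder or a real speaker encoder, the quality term is a plain mean squared error, and the switching rule (take a quality step whenever the quality loss exceeds a fixed `budget`) is only a guess at what "dynamic alternating" could look like, not the rule RoVo uses. All function and parameter names are hypothetical.

```python
import math

# Toy linear stand-ins (assumptions for illustration, not the RoVo models).
DECODER = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]  # 2-dim latent -> 3 "audio" samples
SPEAKER = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]    # 3 samples -> 2-dim speaker embedding


def matvec(matrix, vector):
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]


def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b + 1e-12)


def numeric_grad(loss, point, eps=1e-5):
    """Central-difference gradient, so the stand-ins can stay black boxes."""
    grad = []
    for i in range(len(point)):
        plus = list(point)
        minus = list(point)
        plus[i] += eps
        minus[i] -= eps
        grad.append((loss(plus) - loss(minus)) / (2 * eps))
    return grad


def protect_embedding(z, budget=0.15, steps=100, lr_identity=0.2, lr_quality=0.5):
    """Optimize a perturbation on the latent z; returns (delta, similarity, quality)."""
    clean_audio = matvec(DECODER, z)
    reference = matvec(SPEAKER, clean_audio)

    def decode(delta):
        return matvec(DECODER, [zi + di for zi, di in zip(z, delta)])

    def identity_loss(delta):
        # Speaker similarity between protected and clean audio (lower = more disruption).
        return cosine(matvec(SPEAKER, decode(delta)), reference)

    def quality_loss(delta):
        # Mean squared error between protected and clean audio.
        audio = decode(delta)
        return sum((a - c) ** 2 for a, c in zip(audio, clean_audio)) / len(audio)

    # Small non-zero start: at delta = 0 the similarity is at its maximum,
    # so its gradient vanishes and the identity step would never move.
    delta = [0.01] * len(z)
    for _ in range(steps):
        # Assumed switching rule: repair quality when over budget, else attack identity.
        if quality_loss(delta) > budget:
            loss, lr = quality_loss, lr_quality
        else:
            loss, lr = identity_loss, lr_identity
        grad = numeric_grad(loss, delta)
        delta = [d - lr * g for d, g in zip(delta, grad)]
    return delta, identity_loss(delta), quality_loss(delta)
```

Calling `protect_embedding([1.0, 0.0])` returns the perturbation together with the final speaker similarity and quality loss. In this toy the similarity should end below its starting value of 1 while the quality loss hovers near the budget. A real system would replace the finite-difference gradient with backpropagation through the codec decoder and speaker encoder.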

AVC Samples

| Type | Real voice | Defense voice |
|---|---|---|
| Defended voice compared with real voice | | |
| Fake voice generated using defended audio | | |
| Defended voice after speech enhancement | | |
| Fake voice generated after speech enhancement | | |

RTVC Samples

| Type | Real voice | Defense voice |
|---|---|---|
| Defended voice compared with real voice | | |
| Fake voice generated using defended audio | | |
| Defended voice after speech enhancement | | |
| Fake voice generated after speech enhancement | | |

* The fake voice generated after speech enhancement shows that, even when noise reduction is applied to the defended audio, the defense still hinders realistic generation of fake audio.

YourTTS Samples

| Type | Real voice | Defense voice |
|---|---|---|
| Defended voice compared with real voice | | |
| Fake voice generated using defended audio | | |
| Defended voice after speech enhancement | | |
| Fake voice generated after speech enhancement | | |